“A picture is worth a thousand words.”

The significance of information extracted from online social media platforms for crisis response and management has been studied in many scientific papers and demonstrated in real-life disaster response scenarios by many humanitarian organizations. For instance, the American Red Cross used tweets to save lives during Hurricane Sandy in October 2012. More recently, a number of emergency response organizations and government offices in Florida relied on social media to communicate and coordinate their efforts during Hurricane Irma last summer. The USGS National Earthquake Information Center (NEIC) uses Twitter data to augment its sensor-based earthquake tracking and detection systems.

The majority of these scientific studies and real-life deployments have, however, relied almost exclusively on analyses of textual content (e.g., posts, messages, tweets). In addition to text, people post overwhelming amounts of imagery on social networks within minutes of a disaster striking, thanks to the widespread availability of smartphones with cameras and the ease of capturing and sharing images online. Complementary to the existing literature on using social media text for crisis response and management, social media imagery, if processed in a timely and effective manner, can also enable early decision making and support other humanitarian relief efforts such as gaining situational awareness. Our most recent efforts have therefore concentrated on showing the utility of social media image analysis in a number of studies, ranging from cleaning the raw social media imagery data, so that both machine and human computing resources are used optimally in the downstream pipeline, to assessing the severity of infrastructure damage during an ongoing disaster event. With this blog post, we aim to elaborate further on the potential of social media imagery for crisis management and response by providing more example scenarios and new insights. The pictures displayed as examples below were collected from Twitter during different disaster events, including earthquakes and hurricanes.

Increasing situational awareness

Pictures can tell us more about the impact of a disaster than words alone. Using state-of-the-art computer vision and machine learning techniques, one can try to understand and extract information about the scene depicted in a picture in a variety of settings that collectively help to increase situational awareness.

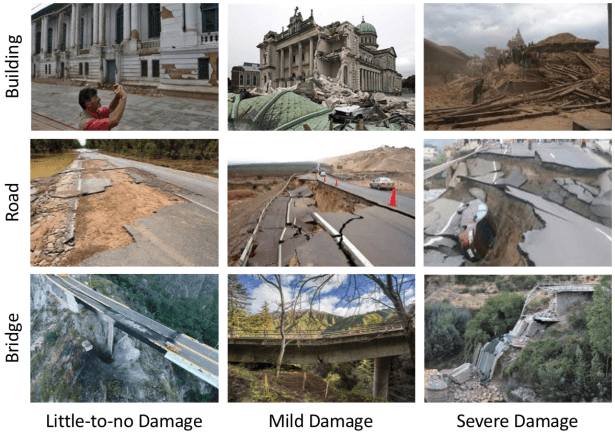

The majority of pictures shared online during disasters depict scenes of infrastructural damage, as shown in Figure 1. Automatic image analysis techniques can be developed to assess the overall severity of destruction, for example, by classifying images into different damage levels. Furthermore, if coupled with ground-truth financial data from other sources such as government organizations or insurance companies, one can even try to estimate the USD equivalent of the infrastructural damage in the early hours of the disaster, and send experts out to the field only once it is safe to do so.
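To make the idea of damage levels concrete, here is a minimal sketch of how the output of an image classifier could be turned into a per-level summary for a batch of pictures. Everything in it is an assumption for illustration: the three level names, the confidence threshold, and the made-up probability vectors are hypothetical and do not come from any particular model or dataset.

```python
from collections import Counter

# Hypothetical damage levels; this post does not prescribe a particular set.
DAMAGE_LEVELS = ("none", "mild", "severe")


def damage_level(probs, min_confidence=0.5):
    """Return the most likely damage level for one image.

    probs holds one probability per entry in DAMAGE_LEVELS. If the top
    probability is below min_confidence (an assumed threshold), the image
    is marked 'uncertain' so that a human can review it.
    """
    if len(probs) != len(DAMAGE_LEVELS):
        raise ValueError("expected one probability per damage level")
    best = max(range(len(probs)), key=lambda i: probs[i])
    if probs[best] < min_confidence:
        return "uncertain"
    return DAMAGE_LEVELS[best]


def summarize(batch):
    """Count how many images in the batch fall into each damage level."""
    return Counter(damage_level(p) for p in batch)


# Made-up classifier outputs for four images (illustrative only, not real data).
example_batch = [
    (0.10, 0.20, 0.70),
    (0.80, 0.10, 0.10),
    (0.40, 0.35, 0.25),
    (0.05, 0.15, 0.80),
]
summary = summarize(example_batch)
```

Routing low-confidence images to an 'uncertain' bucket is one simple way to combine machine and human effort: the model handles the clear cases and people look at the rest.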

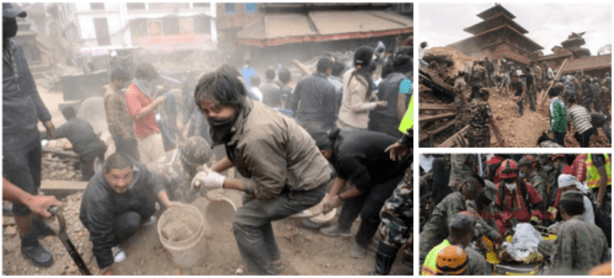

Besides pictures showing infrastructural damage, people also post many pictures that indicate the status of affected individuals in the disaster zones, as shown in Figure 2. These pictures can potentially hint at the severity and urgency of the victims' injuries. One can also infer from these pictures whether an injured person has already received first aid or treatment.

Figure 3 shows snapshots from ongoing rescue operations. These pictures can be useful for understanding whether the ongoing rescue efforts are run by affected individuals themselves, by volunteers, or by professional search and rescue teams, and whether there is a need for more hands to help.

Similarly, pictures showing crowds of affected individuals can be used to assess the quality of the shelter being provided (if any), as illustrated in Figure 4. Are there affected individuals waiting out in the street with no proper tents or blankets? Or are they at least under a roof, with adequate treatment and bedding?

Furthermore, pictures such as those shown in Figure 5 can help monitor the coordination and distribution of aid to affected individuals in disaster zones. Questions that can potentially be answered by analyzing such images include estimating the type and amount of aid being delivered, identifying the organizations that participate in the aid and donation efforts, or even understanding how orderly the distribution of aid is.

In summary, social media imagery data contain enough signal to enable several time-critical situational awareness tasks such as infrastructure damage assessment, identification of injured or deceased people, search and rescue planning, and assessment of shelter and aid needs.

Actionable information extraction

Taking this a step further, for instance, in the infrastructure damage assessment task, it is possible to develop more sophisticated models for detecting and localizing specific infrastructure types (e.g., buildings, roads, bridges) that are critical for planning disaster relief efforts. Yet another level of improvement would be to automatically assess, from the image content, the severity of the damage to these structures, as shown in Figure 6. It would be extremely useful for humanitarian organizations to know whether a particular road or bridge is partially or completely destroyed. This type of analysis can play a crucial role in planning the logistics of aid and relief efforts as well as in organizing search and rescue activities.

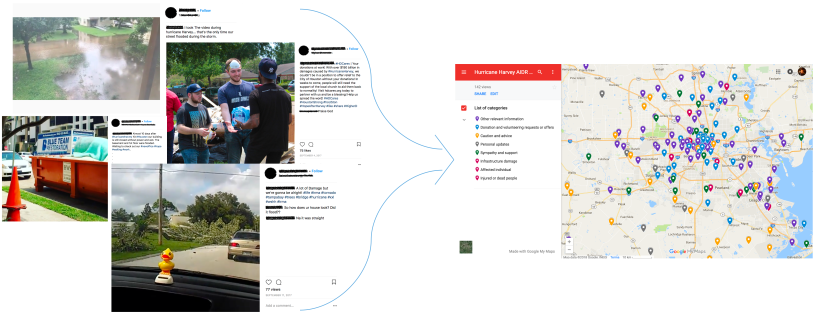

Thanks to the ever-increasing popularity of unmanned aerial vehicles, it has become commonplace to find aerial images of disaster-struck areas in online social networks (see Figure 7). Analysis of such aerial imagery can allow for a detailed estimation of the extent of the damage caused by the disaster. Better yet, if these images are also accompanied by geo-location information, then projecting the results of aerial image analysis onto a map would help humanitarian organizations see the big picture from an additional perspective.
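As a rough illustration of the map projection idea, the sketch below groups geo-tagged images into a regular latitude/longitude grid and averages a per-image damage score in each cell, which is the kind of table a heat-map layer could be drawn from. The function names, the grid size, and the notion of a damage score between 0 and 1 are assumptions made for this example, not part of any existing system.

```python
import math
from collections import defaultdict


def grid_cell(lat, lon, cell_deg=0.01):
    """Map a coordinate to the integer index of the grid cell containing it.

    cell_deg is the cell size in degrees (an assumed, tunable parameter).
    """
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def aggregate_by_cell(observations, cell_deg=0.01):
    """Average the damage scores of all images that fall in the same cell.

    observations: iterable of (lat, lon, score) tuples, where score is a
    hypothetical per-image damage score in [0, 1].
    Returns a dict {cell_index: (mean_score, image_count)}.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for lat, lon, score in observations:
        cell = grid_cell(lat, lon, cell_deg)
        sums[cell] += score
        counts[cell] += 1
    return {cell: (sums[cell] / counts[cell], counts[cell]) for cell in sums}
```

Keeping the image count next to the mean lets a map viewer distinguish a cell backed by many pictures from one backed by a single, possibly unrepresentative, picture.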

The set of examples presented in this blog post is certainly not an exhaustive list of potential applications of social media image analysis for crisis response and management. We believe that as more researchers, field experts, and government or humanitarian organizations come to appreciate the potential of social media image analysis for disaster response and management, the variety of use cases will grow through further discussions and future collaborations.

Computer vision perspective

Despite the astonishing progress in traditional computer vision tasks such as object detection and fine-grained image classification, driven by advances in deep learning and the availability of large-scale datasets over the last decade or so, state-of-the-art computer vision systems are still far from the general visual intelligence of humans. That is, many of the example use cases described above sound reasonably straightforward for humans to perform, but off-the-shelf computer vision techniques cannot yet realize them at a level of performance that would allow humanitarian organizations to integrate such automated solutions into their operational routines. There is definitely a need for further research, for instance, to account for the overall context in an image, to explain the compositional interactions or relationships between objects in that context, and eventually to reach a cognitive understanding of, and ability to reason about, the visual scene using the rich content available in the image.

Localizing an intact building is relatively simple and can be handled successfully with an off-the-shelf algorithm, but how about detecting partially damaged or completely destroyed buildings? A picture of a house damaged by an earthquake looks very different from a picture of a house damaged by a wildfire, a flood, or a snowstorm. How can we create a universal model that captures the concept of ‘damage’ in the most general sense, instead of training a separate object recognition model for each particular type of damaged house, an approach that is very hard to scale? Similarly, many of the questions posed above require much better visual question answering systems than those available today. Many of the scenarios sketched above require better image summarization or description techniques than simple image captioning. Would it not be nice to have machines that can write a paragraph from an image, or even an essay from a collection of images, about a disaster event?

The Crisis Computing team at QCRI (part of HBKU) is actively working on utilizing social media imagery and text content to develop next-generation computational models and systems useful for humanitarian and development organizations such as UN OCHA and the World Bank in their disaster response and management operations.

Related scientific studies:

- Muhammad Imran, Carlos Castillo, Fernando Diaz, Sarah Vieweg. Processing Social Media Messages in Mass Emergency: A Survey. ACM Computing Surveys 47, 4, Article 67 (June 2015).

- Dat Tien Nguyen, Firoj Alam, Ferda Ofli, Muhammad Imran. Automatic Image Filtering on Social Networks Using Deep Learning and Perceptual Hashing During Crises. In Proceedings of the 14th International Conference on Information Systems for Crisis Response And Management (ISCRAM), 2017, Albi, France.

- Dat Tien Nguyen, Ferda Ofli, Muhammad Imran, Prasenjit Mitra. Damage Assessment from Social Media Imagery Data During Disasters. In Proceedings of the IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining (ASONAM), 2017, Sydney, Australia.